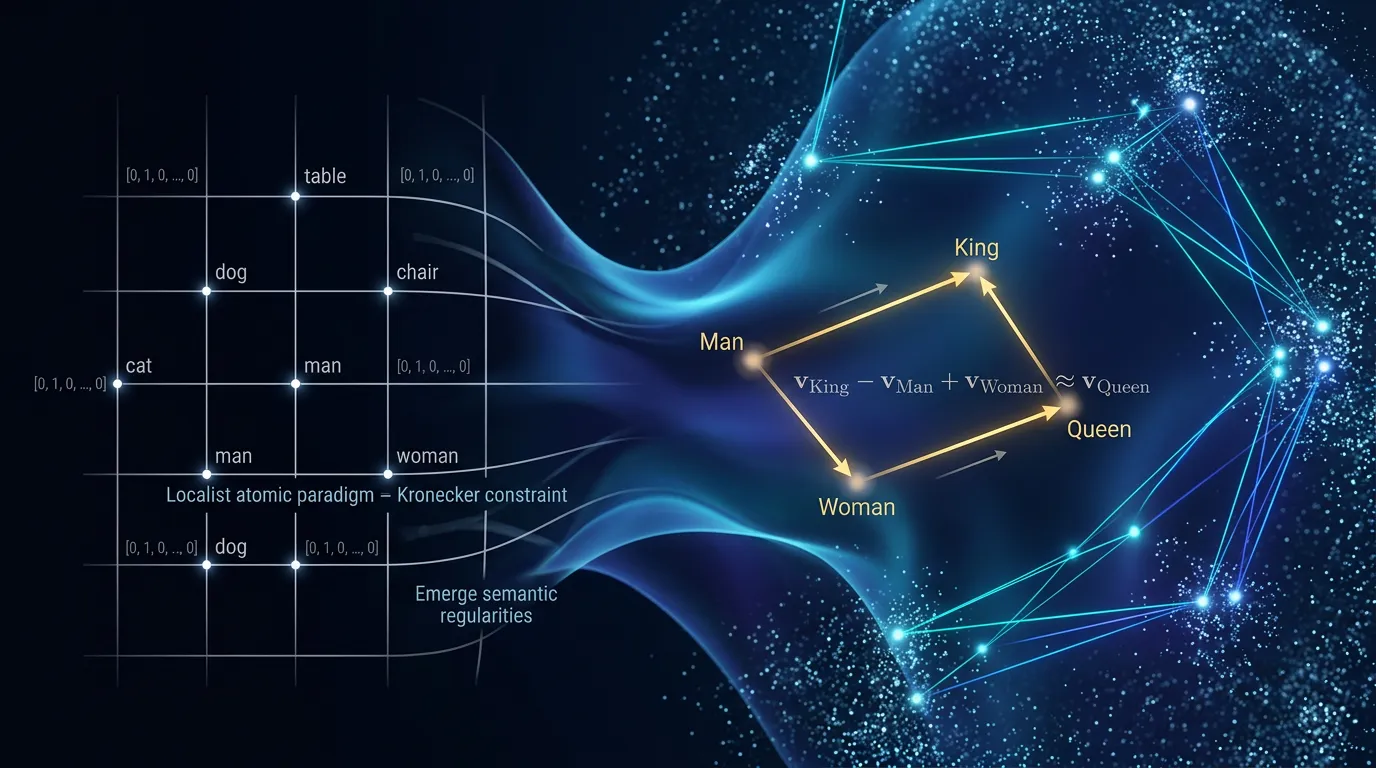

Word Embeddings: Beyond Atomic Units and One-Hot Encoding

Master the transition from discrete N-gram models to distributed vector representations. Learn how Word2Vec uses linear algebra and vector offsets to capture semantic relations.

Content adapted from *Efficient Estimation of Word Representations in Vector Space* by Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean.
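The contrast between atomic one-hot units and distributed vectors can be made concrete with a small sketch. It assumes hand-picked two-dimensional toy vectors (not trained Word2Vec output) and uses hypothetical helper names `cosine` and `analogy`. In pure Python, it shows that one-hot encoding makes every pair of distinct words orthogonal. With dense vectors, a simple offset such as king - man + woman lands nearest to queen, the well-known example from the paper.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def analogy(emb, a, b, c):
    """Hypothetical helper: answer "a is to b as c is to ?" by returning the word
    whose vector is closest (by cosine) to emb[b] - emb[a] + emb[c]."""
    target = [xb - xa + xc for xa, xb, xc in zip(emb[a], emb[b], emb[c])]
    return max(emb, key=lambda w: cosine(emb[w], target))

vocab = ["king", "queen", "man", "woman"]

# One-hot encoding: each word is an atomic unit on its own axis.
one_hot = {w: [1.0 if j == i else 0.0 for j in range(len(vocab))]
           for i, w in enumerate(vocab)}

# Hypothetical hand-picked 2-D embeddings (axis 0 ~ "royalty", axis 1 ~ "male vs. female").
# These are NOT trained Word2Vec vectors; they only illustrate the geometry.
dense = {
    "king":  [0.9,  0.8],
    "queen": [0.9, -0.8],
    "man":   [0.1,  0.8],
    "woman": [0.1, -0.8],
}

# Any two distinct one-hot vectors are orthogonal, so every pair looks equally unrelated.
print(cosine(one_hot["king"], one_hot["queen"]))  # 0.0
print(cosine(one_hot["king"], one_hot["man"]))    # 0.0

# With dense vectors, the offset king - man + woman lands nearest to queen.
print(analogy(dense, "man", "king", "woman"))     # queen
```

This sketch searches the whole toy vocabulary, including the query words. With real embeddings and large vocabularies, tools often exclude the query words from the candidate set so that the answer is not trivially one of the inputs.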