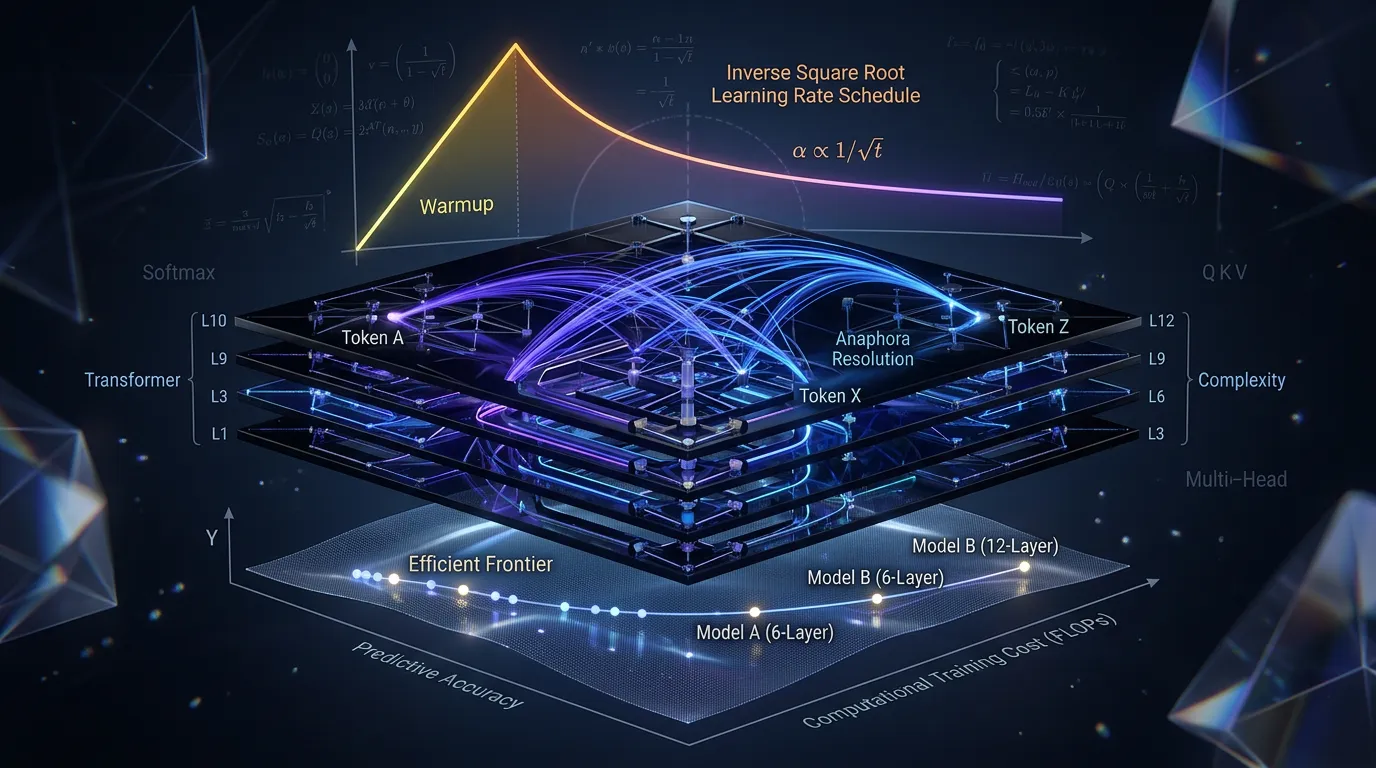

Transformer Performance: BLEU, FLOPs, and Optimization

Analyze Transformer Pareto efficiency on WMT benchmarks. Learn to optimize training with custom LR schedulers and visualize emergent linguistic structures.

Content adapted from "Attention Is All You Need" by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin.