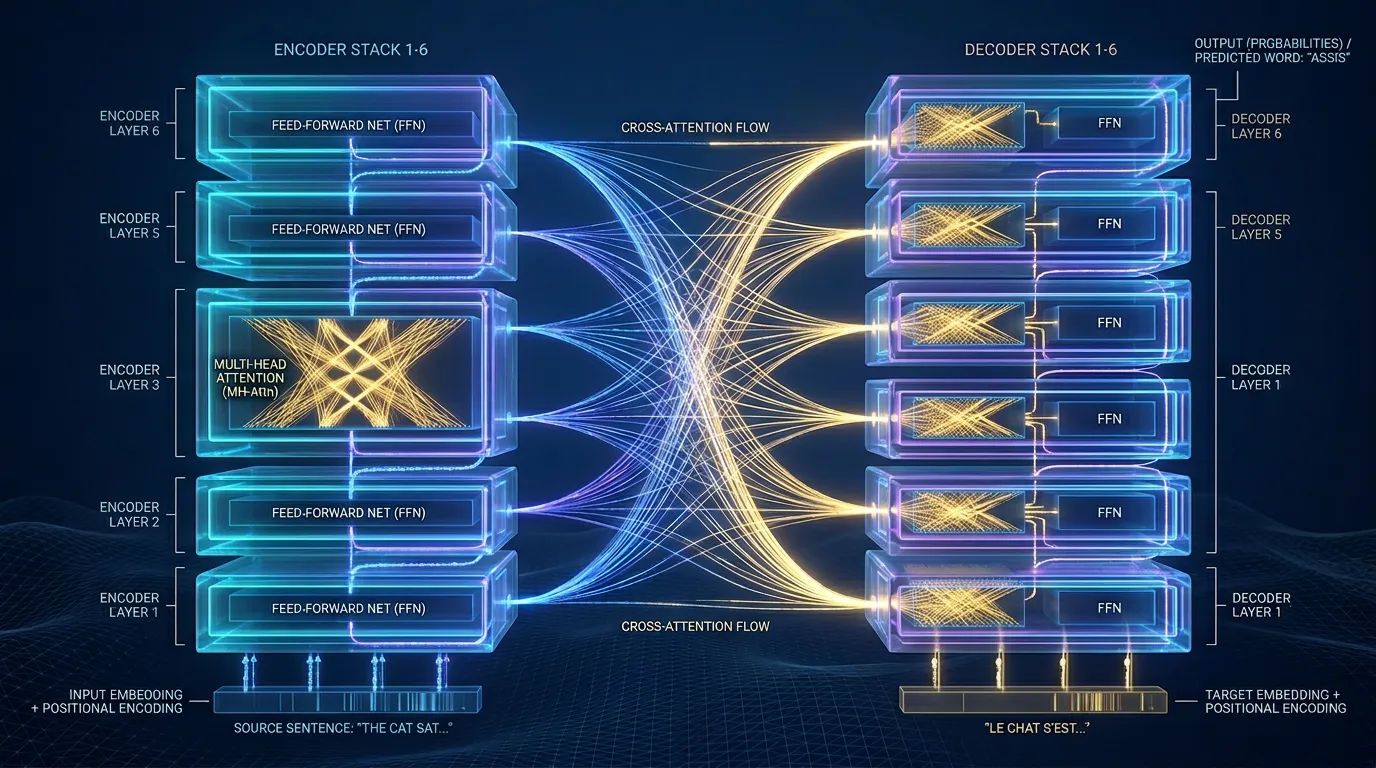

Transformer Macro-Architecture: Stacks and Sub-layers

Master the Transformer's structure. Explore N=6 layer stacks, causal masking, residual connections, FFN logic, and weight-tying strategies.

Content adapted from *Attention Is All You Need* by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin.