Topic 4 Magic With Vectors Semantic And Syntactic Introduction

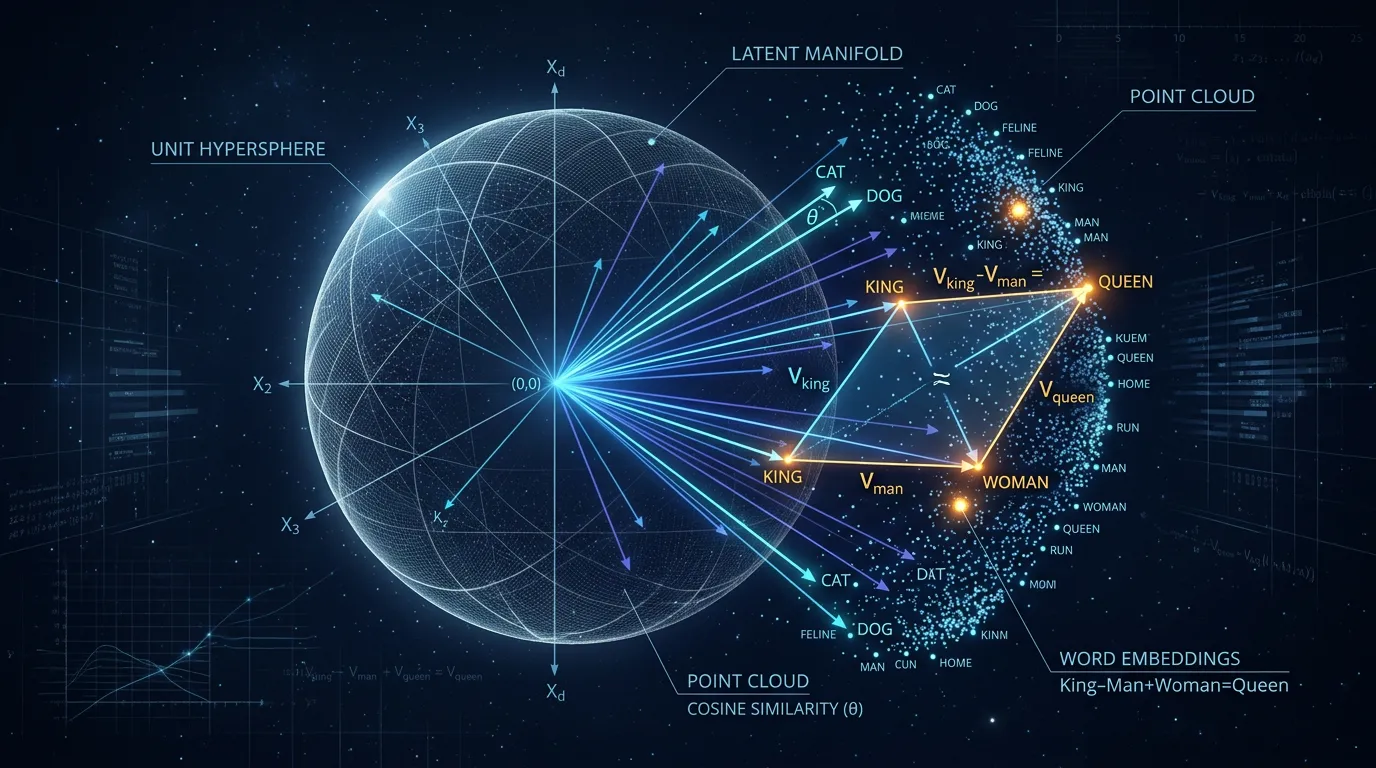

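Master the geometry of word embeddings. Learn why cosine similarity, which compares the direction of two vectors and ignores their length, is usually preferred over Euclidean distance for comparing words, and how to solve analogies using linear relational offsets in R^D.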
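As a first taste of both ideas, here is a minimal sketch in plain Python. The 2-D vectors in `toy` are invented for illustration and are not trained embeddings, and the helper names (`cosine_similarity`, `euclidean_distance`, `solve_analogy`) are hypothetical. The analogy step follows the offset idea described in the source paper: form `b - a + c`, then pick the remaining word whose vector is closest by cosine similarity.

```python
import math

def cosine_similarity(u, v):
    # Cosine of the angle between u and v: depends on direction, not length.
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def euclidean_distance(u, v):
    # Straight-line distance: sensitive to vector length as well as direction.
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

# Hypothetical toy vectors (made up for this sketch, not real embeddings).
toy = {
    "king":  [3.0, 1.0],
    "queen": [3.0, 3.0],
    "man":   [1.0, 1.0],
    "woman": [1.0, 3.0],
    "apple": [-2.0, 0.5],
}

def solve_analogy(a, b, c, vectors):
    # "a is to b as c is to ?": build the offset vector b - a + c,
    # then return the closest remaining word by cosine similarity.
    target = [vb - va + vc
              for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine_similarity(vectors[w], target))

# Same direction, different length: cosine treats these as near-identical,
# while Euclidean distance reports them as clearly apart.
u, v = [1.0, 2.0], [2.0, 4.0]
print(cosine_similarity(u, v), euclidean_distance(u, v))

print(solve_analogy("man", "woman", "king", toy))  # prints "queen"
```

The input words are excluded from the candidates because, in practice, the offset vector often lands closest to one of the query words themselves.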

Content adapted from "Efficient Estimation of Word Representations in Vector Space" by Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean.