Topic 2: The Core Innovation: Attention Mechanisms (Introduction)

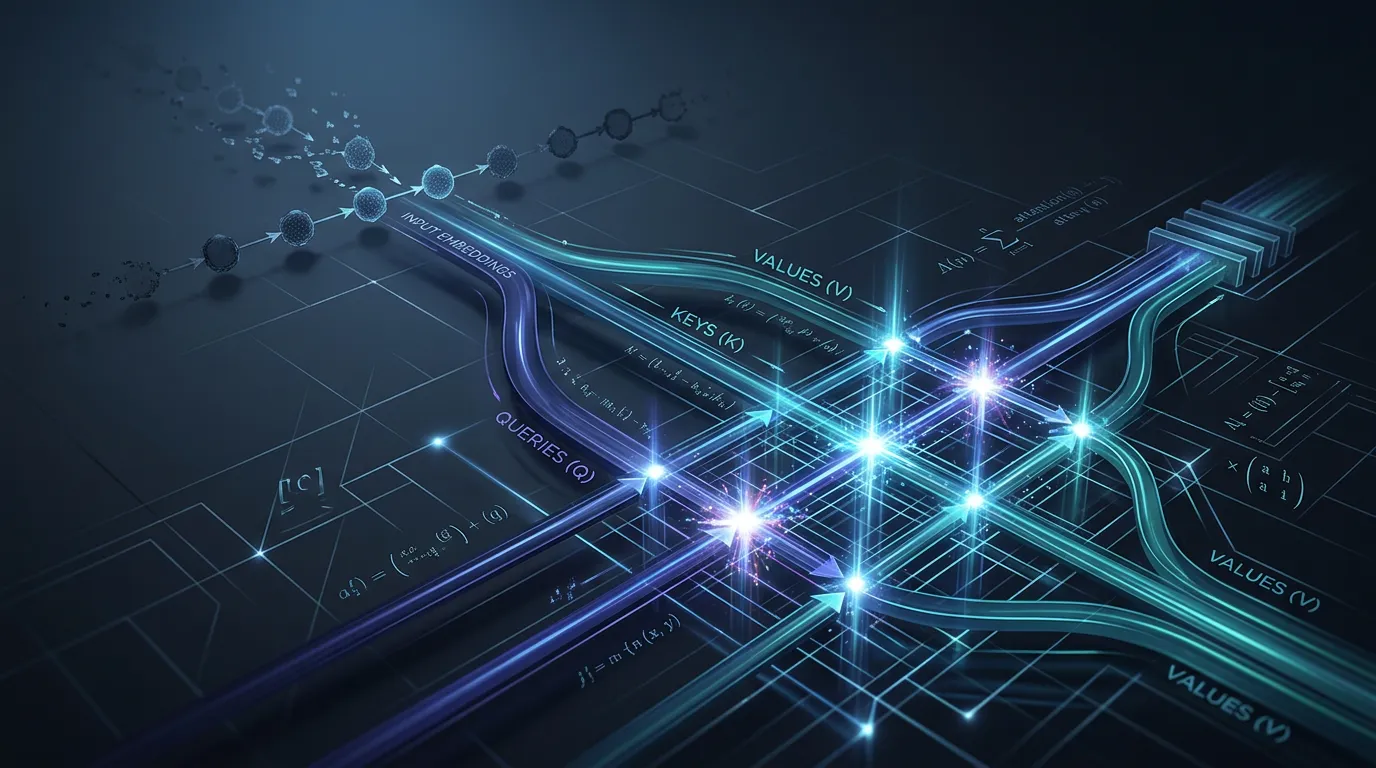

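Master the transition from RNNs to Transformers. Learn how dot-product similarity between Queries and Keys lets any two positions in a sequence interact in O(1) sequential steps, rather than the O(n) steps a recurrent network needs, and how this enables parallel computation.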
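As a rough illustration of the idea, the sketch below scores every Query against every Key with a dot product, scales by the square root of the key dimension as in the paper's scaled dot-product attention, and normalizes each row with a softmax. The function names (`dot`, `softmax`, `attention_weights`) and the toy vectors are assumptions made for this example, not part of the original material, and Values are left out to keep the focus on Query-Key similarity.

```python
import math

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(queries, keys):
    """Return one row of attention weights per query (hypothetical helper).

    Each query is compared with every key directly, so no position has to
    wait for information to be passed step by step along the sequence.
    """
    d_k = len(keys[0])
    scale = math.sqrt(d_k)
    return [softmax([dot(q, k) / scale for k in keys]) for q in queries]

# Toy data (illustrative only): two queries, three keys, dimension 2.
queries = [[1.0, 0.0], [0.0, 1.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

weights = attention_weights(queries, keys)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row depends only on its own query and the full set of keys, so the rows can be computed independently of one another. That independence is what makes the computation parallelizable in practice, where it is usually expressed as a single matrix multiplication.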

Content adapted from *Attention Is All You Need* by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin.