Topic 2: The Core Innovation: Attention Mechanisms (Guided Practice)

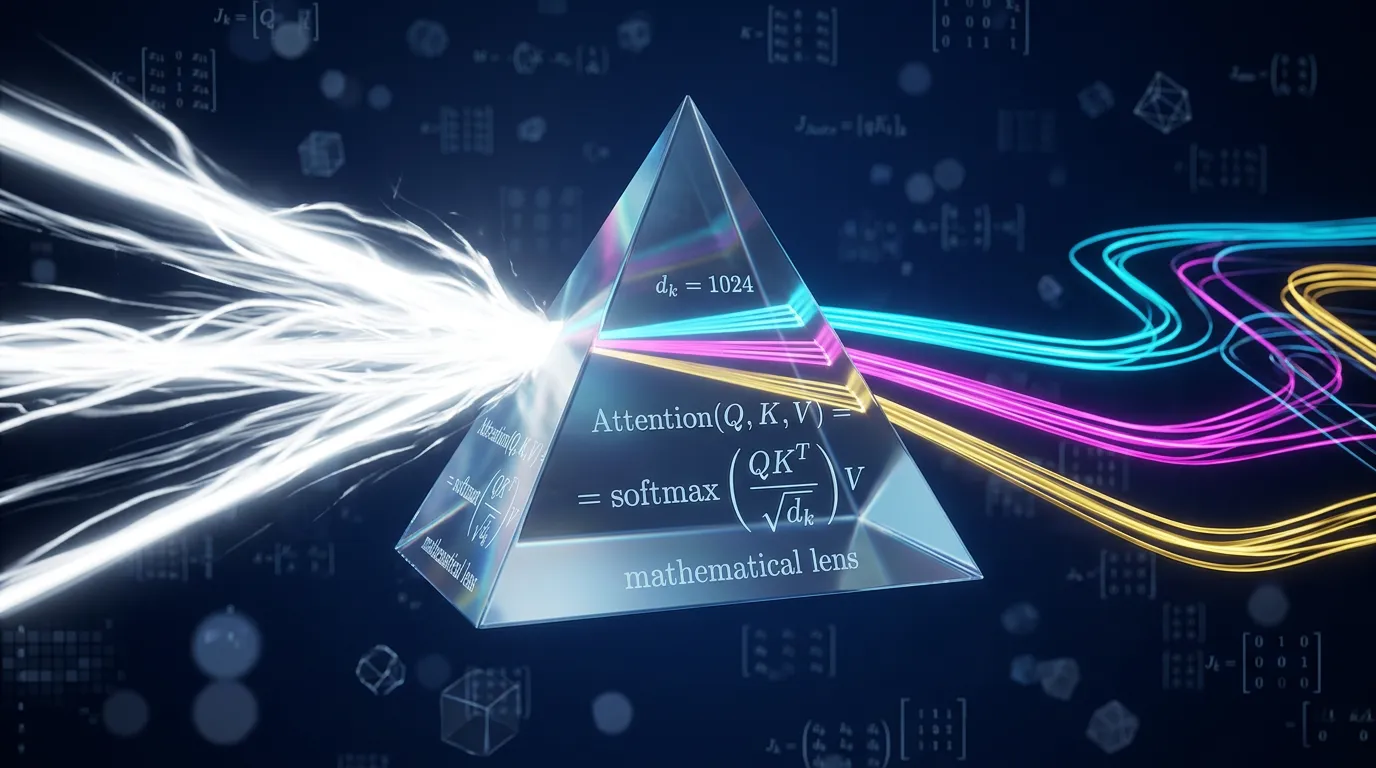

Derive the 1/sqrt(dk) scaling factor, analyze softmax saturation pathologies, and compare the computational complexity of Self-Attention vs. RNN architectures.
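As a brief sketch of where the scaling factor named above comes from, the following derivation follows the variance argument given in the source paper. It assumes that the components of a query vector $q$ and a key vector $k$ are independent random variables with mean 0 and variance 1; that assumption is an idealization and need not hold exactly for trained models.

```latex
% Assumption: q_i and k_i are independent, with E[q_i] = E[k_i] = 0
% and Var(q_i) = Var(k_i) = 1, for i = 1, ..., d_k.
\begin{align*}
q \cdot k &= \sum_{i=1}^{d_k} q_i k_i \\
\mathbb{E}[q_i k_i] &= \mathbb{E}[q_i]\,\mathbb{E}[k_i] = 0 \\
\operatorname{Var}(q_i k_i) &= \mathbb{E}[q_i^2]\,\mathbb{E}[k_i^2] - \left(\mathbb{E}[q_i k_i]\right)^2 = 1 \\
\operatorname{Var}(q \cdot k) &= \sum_{i=1}^{d_k} \operatorname{Var}(q_i k_i) = d_k \\
\operatorname{Var}\!\left(\frac{q \cdot k}{\sqrt{d_k}}\right) &= \frac{d_k}{d_k} = 1
\end{align*}
```

Under these assumptions the unscaled dot product has standard deviation $\sqrt{d_k}$, so for large $d_k$ the logits tend to be large in magnitude and the softmax is pushed toward regions with very small gradients. Dividing by $\sqrt{d_k}$ keeps the logit variance at 1 regardless of dimension.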

Content adapted from "Attention Is All You Need" by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin.