Sequence Transduction Desiderata: Attention vs. RNNs

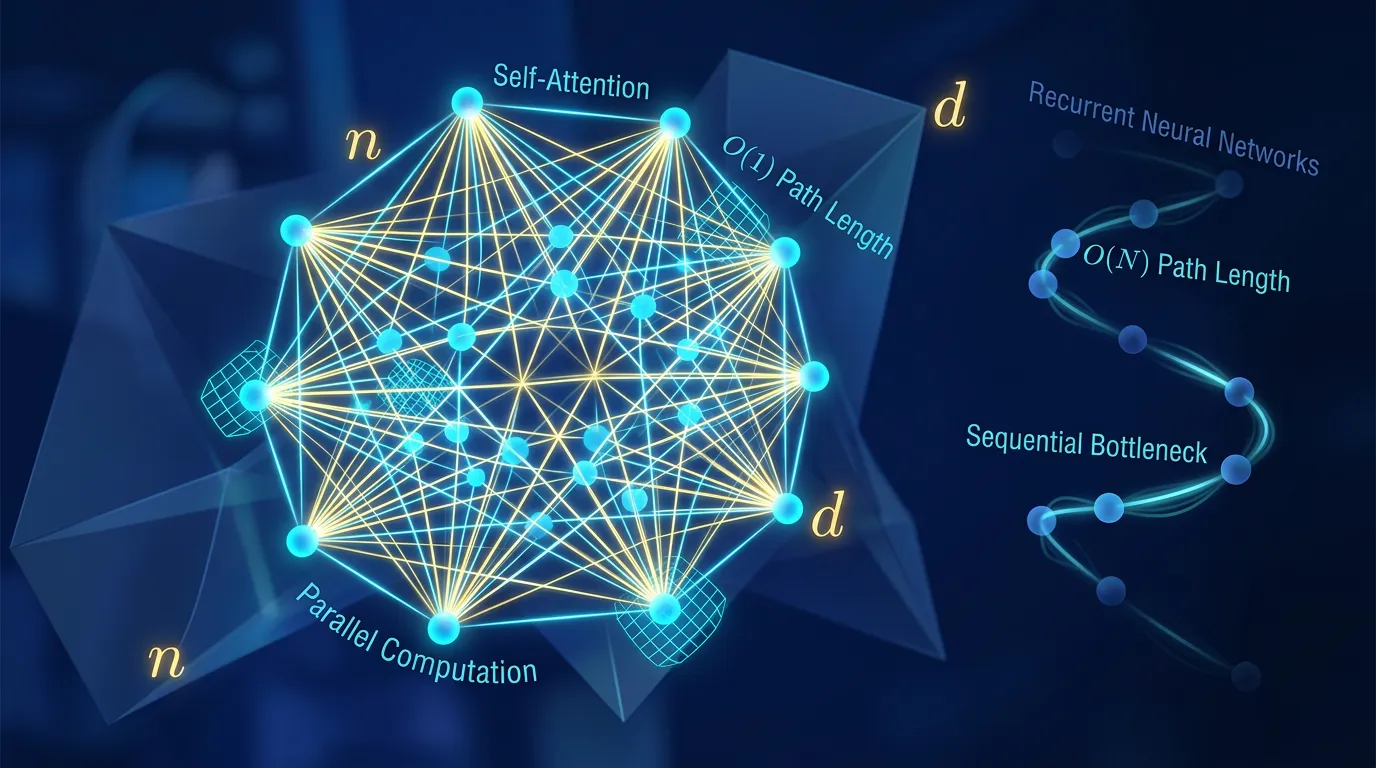

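Compare self-attention (the core of the Transformer) with recurrent and convolutional layers using three formal desiderata. The first is total computational complexity per layer. The second is parallelizability, measured as the minimum number of sequential operations a layer requires. The third is the maximum path length between any two positions, which determines how easily a model can learn long-range dependencies.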
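The three desiderata can be made concrete with a small cost calculator. The sketch below encodes the asymptotic figures the source paper reports: self-attention costs O(n²·d) per layer with O(1) sequential operations and O(1) path length, recurrence costs O(n·d²) with O(n) and O(n), and convolution costs O(k·n·d²) with O(1) sequential operations. The path-length model is a simplification we assume for illustration. Constants are dropped, and one "hop" is one layer or one recurrence step. Contiguous kernels need O(n/k) layers to connect the farthest pair of positions, and dilated kernels need O(log_k n). The function names `per_layer_ops`, `sequential_ops` and `max_path_length` are hypothetical.

```python
import math

# Simplified cost model (an assumption for illustration): constants are dropped,
# and one "hop" is one layer or one recurrence step.
KINDS = ("self_attention", "recurrent", "conv_contiguous", "conv_dilated")


def per_layer_ops(kind: str, n: int, d: int, k: int = 3) -> int:
    """Leading-order work per layer: n = sequence length, d = width, k = kernel width."""
    if kind == "self_attention":
        return n * n * d          # O(n^2 * d): all position pairs, d-dim dot products
    if kind == "recurrent":
        return n * d * d          # O(n * d^2): one d x d matrix-vector product per step
    if kind in ("conv_contiguous", "conv_dilated"):
        return k * n * d * d      # O(k * n * d^2): k taps, each a d x d product
    raise ValueError(f"unknown layer kind: {kind}")


def sequential_ops(kind: str, n: int) -> int:
    """Operations within one layer that must run one after another."""
    return n if kind == "recurrent" else 1   # only recurrence waits on h_{t-1}


def max_path_length(kind: str, n: int, k: int = 3) -> int:
    """Hops needed to connect positions 0 and n-1 (the farthest pair)."""
    if kind.startswith("conv") and k < 2:
        raise ValueError("kernel width k must be at least 2")
    if kind == "self_attention":
        return 1                              # any position attends to any other directly
    if kind == "recurrent":
        return n - 1                          # signal passes through n-1 state updates
    if kind == "conv_contiguous":
        return math.ceil((n - 1) / (k - 1))   # receptive field after L layers: L*(k-1)+1
    if kind == "conv_dilated":
        layers = 0
        while k ** layers < n:                # dilations 1, k, k^2, ... give field k^L
            layers += 1
        return layers
    raise ValueError(f"unknown layer kind: {kind}")


if __name__ == "__main__":
    n, d, k = 50, 512, 3   # sentence-length input; d = 512 as in the paper's base model
    print(f"{'layer':<16}{'ops/layer':>14}{'sequential':>12}{'max path':>10}")
    for kind in KINDS:
        print(f"{kind:<16}{per_layer_ops(kind, n, d, k):>14,}"
              f"{sequential_ops(kind, n):>12}{max_path_length(kind, n, k):>10}")
```

With n = 50 and d = 512, self-attention does less work per layer than recurrence because n < d. It also needs a single sequential step and a single hop between any two positions. For very long sequences the n² term dominates the cost, and the comparison reverses.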

Content adapted from *Attention Is All You Need* by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin.