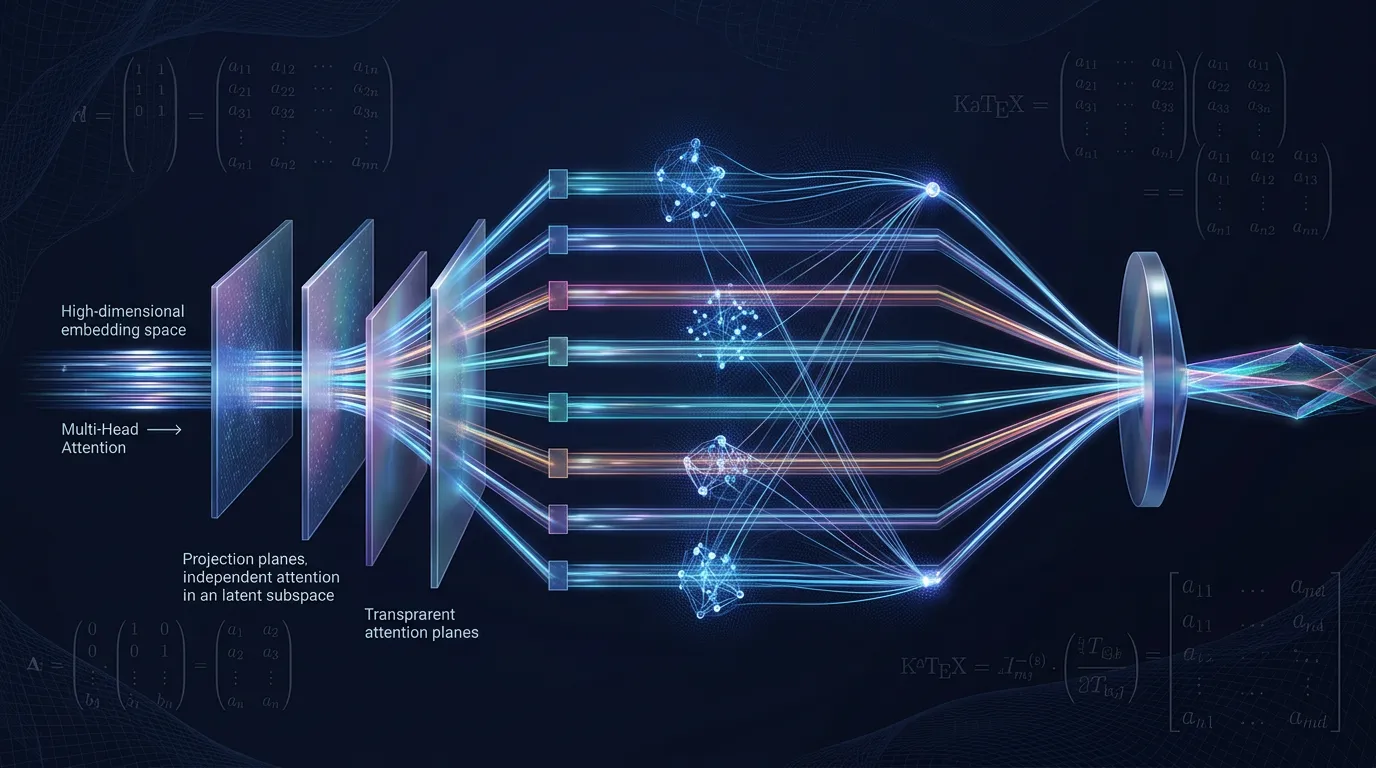

Multi-Head Attention: Parallel Latent Subspaces

Master the math of Multi-Head Attention. Learn how subspace decomposition captures complex relationships and analyze self, masked, and cross-attention variants.

Content adapted from Attention Is All You Need by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, Illia Polosukhin.Original Source