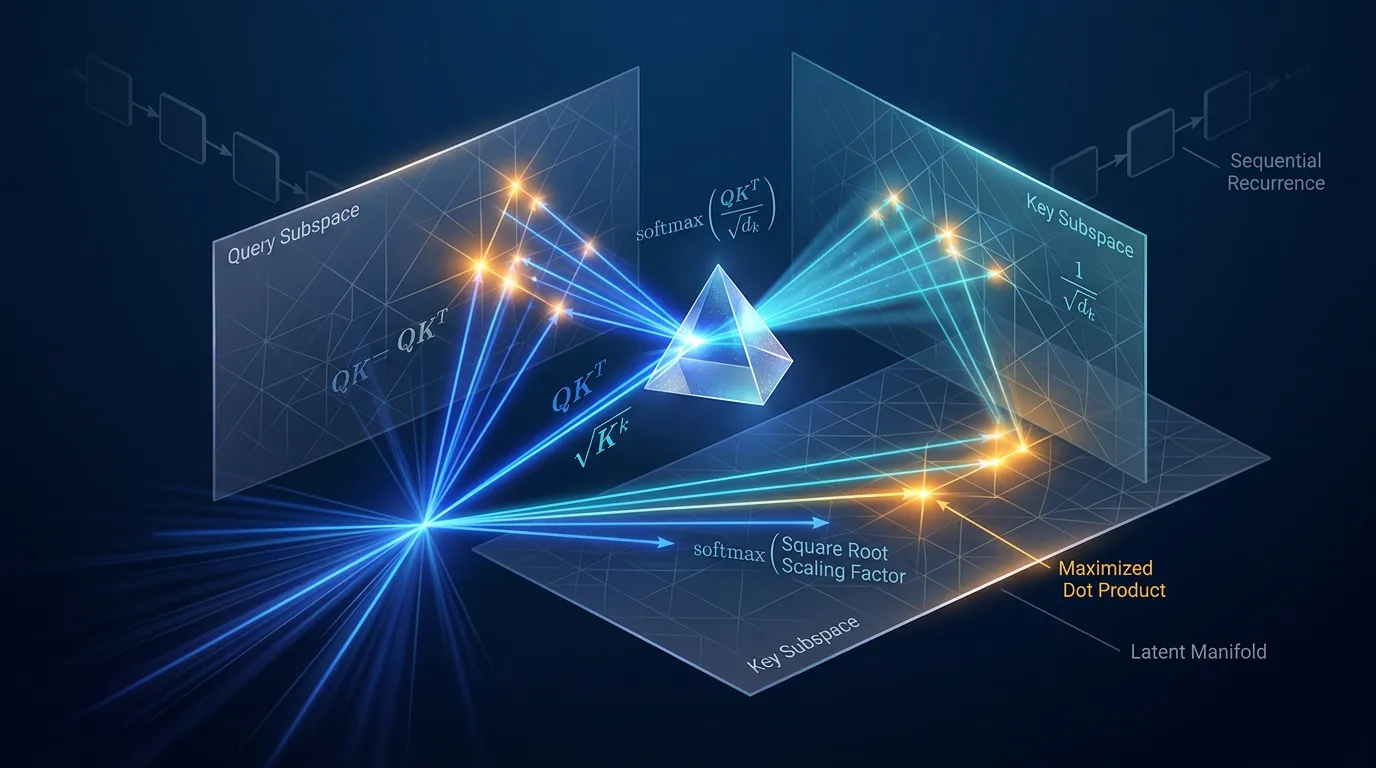

Dot-Product Attention: Geometry, Complexity, and Scaling

Master the algebra of alignment. Define dot products between high-dimensional vectors, analyze the O(1) path length that self-attention gives any two positions, and counter vanishing softmax gradients with scaled attention.

Content adapted from Attention Is All You Need by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, Illia Polosukhin.