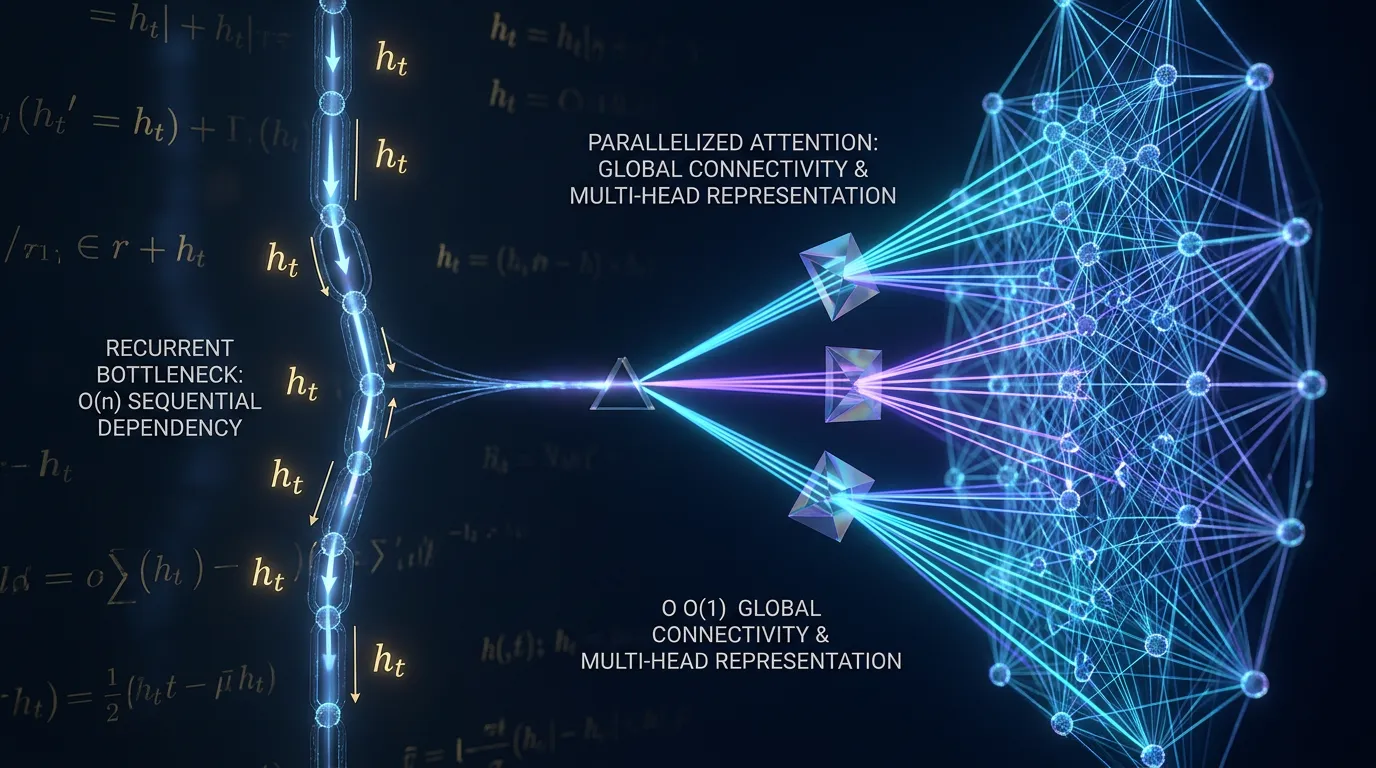

Analyzing RNN Bottlenecks and Multi-Head Attention

Explore the sequential-computation bottleneck of RNNs, the path length between distant positions in a sequence, and how Multi-Head Attention counteracts the reduced effective resolution that comes from averaging attention-weighted positions.

Content adapted from Attention Is All You Need by Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, Illia Polosukhin.